Harris Mohamed

UIUC 2021 - BS in Computer Engineering

UIUC 2025 - MSc in Computer Science

Creativity is intelligence having fun - Albert Einstein

Hello! I'm Harris. I have a Bachelor's Degree in Computer Engineering and a Master of Computer Science both from the University of Illinois at Urbana-Champaign. I have completed several internships in industry and am currently employed at Kratos Defense and Security Solutions. At Kratos, I apply advanced AI/ML techniques to RF data from our global sensor network to enhance Space Domain Awareness (SDA) areas including but not limited to satellite tracking, signal pattern-of-life, and satcom analysis. In my spare time, I pursue creative and technical efforts, such as filmmaking and vertical farming.

Academic research conducted during my time as a student at the University of Illinois at Urbana-Champaign and professional research carried out throughout my industry career.

AMOS 2025: Expanding Pattern-of-Life capabilities on Passive RF datasets

GSAW 2025: Methods of Detecting Electromagnetic Interference in Passive Radio Frequency Data

GSAW 2024: Monitoring Satellite Pattern-of-Life Changes with Passive Radio Frequency Data

AMOS 2023: Monitoring Satellite Pattern-of-Life Changes with Passive Radio Frequency Data

Sentinel Prime: Real-time LIDAR point cloud FPGA-based 3D classification

Sentinel: Real-time LIDAR Classification System

Personal projects that I am extremely proud of. My best work is encompassed in these projects. Some are dumb, some are failures, but they're all great.

Atlas

The War Room

Vertical Farming

Project Loveless

Custom Pipelined RISC-V Implementation

Eigenmask

Project Hybris

Blender

LC-4 Operating System

CUDA Ray Tracing: GPU-accelerated ray tracing renderer

Project Watchdog

Simple Automated Farming

HackMe: Hackathon-winning proof of concept noninvasive EEG wave capture

Project LD

DAQA Leader for Illini Formula Electric

OCR & Dyslexia

S.T.E.V.E.

Shipping Generator

Internships and Industry positions where I got to apply my academic skills in a professional setting.

Kratos Defense & Security Solutions

Artificial Intelligence Engineer

Kratos Defense & Security Solutions

Software Development Engineer II

NVIDIA

Computer Architecture Intern

Kratos Defense & Security Solutions

System Software Engineering Intern

Kratos Defense & Security Solutions

System Design/Architecture Engineering Intern

Continental Automotive

Embedded Software Intern

Weber Packaging Solutions

Product Systems Intern

View my complete professional resume below or download a copy for offline viewing.

For the best viewing experience on mobile devices, please download the resume using the button below.

ECE 385: Digital Systems Lab

Undergraduate Course Assistant

CS 411 - Database Systems

CS 437 - Internet of Things

CS 441 - Applied Machine Learning

CS 498 - Cloud Computing Applications

CS 598 - Deep Learning for Healthcare

ECE 310/311 - Digital Signal Processing

ECE 385 - Digital Systems Lab

ECE 391 - Computer Systems Engineering

ECE 408 - Applied Parallel Programming

ECE 411 - Computer Organization and Design

ECE 420 - Signal Processing Lab

ECE 448 - Introduction to Artificial Intelligence

I was selected to deliver a plenary presentation on my research into expanding pattern-of-life capabilities for passive RF datasets. The work introduces a multivariate analytical framework that enables more nuanced anomaly detection compared to traditional univariate methods, applied across time-series RF metrics. This is a sequel to the work I presented at AMOS in 2023. Paper | Presentation
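To illustrate why a multivariate view can catch anomalies that univariate checks miss, here is a minimal sketch. It is not the method from the paper: it uses the Mahalanobis distance as a stand-in, and the two-metric `history` data is made up purely for illustration.

```python
import math

def zscores(point, data):
    """Per-metric z-scores of `point` (the univariate view)."""
    scores = []
    for i, column in enumerate(zip(*data)):
        mean = sum(column) / len(column)
        std = math.sqrt(sum((v - mean) ** 2 for v in column) / len(column))
        scores.append((point[i] - mean) / std)
    return scores

def mahalanobis_2d(point, data):
    """Mahalanobis distance of a 2-metric point (the multivariate view)."""
    n = len(data)
    mx = sum(p[0] for p in data) / n
    my = sum(p[1] for p in data) / n
    sxx = sum((p[0] - mx) ** 2 for p in data) / n
    syy = sum((p[1] - my) ** 2 for p in data) / n
    sxy = sum((p[0] - mx) * (p[1] - my) for p in data) / n
    det = sxx * syy - sxy ** 2
    dx, dy = point[0] - mx, point[1] - my
    # d^2 = [dx dy] * inverse(covariance) * [dx dy]^T, with the 2x2 inverse written out.
    d2 = (syy * dx * dx - 2 * sxy * dx * dy + sxx * dy * dy) / det
    return math.sqrt(d2)

# Hypothetical history: two metrics that normally move together.
history = [(x, x + 0.1 * (-1) ** x) for x in range(10)]

# Each value is unremarkable on its own, but the combination breaks the usual pattern.
suspect = (2, 7)
```

For `suspect`, every per-metric z-score stays well under a typical threshold of 3, while the Mahalanobis distance is very large because the point violates the correlation between the two metrics.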

I was selected to deliver a plenary presentation on my research into detecting electromagnetic interference in passive RF data. The work develops methods for identifying EMI signatures within passive radio frequency datasets, improving signal integrity and operational awareness in contested electromagnetic environments. Methods explored include 1-shot image segmentation models, K-means clustering, and classical statistical methods. Paper

I gave a presentation on Monitoring Satellite Pattern-of-Life Changes with Passive Radio Frequency Data. This is very similar to my presentation at AMOS 2023. My work can be found at the following link. Presentation

I gave a presentation on Monitoring Satellite Pattern-of-Life Changes with Passive Radio Frequency Data. Here, I explored using statistical, time series, and machine learning approaches for univariate anomaly detection on time series RF datasets. I presented on some anomalies detected that have relevance to the Space Domain Awareness (SDA) mission. My paper can be found at the following link. Paper | Presentation

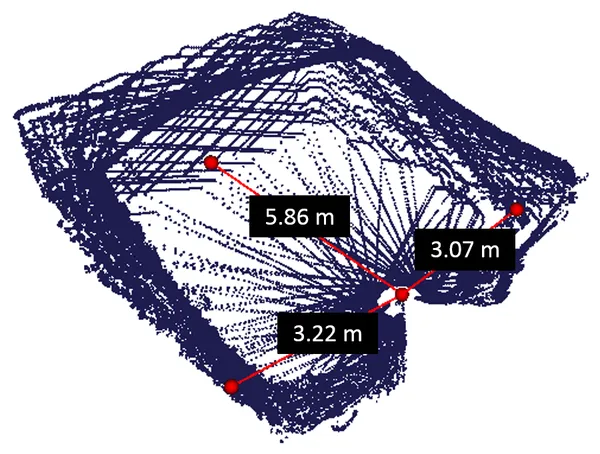

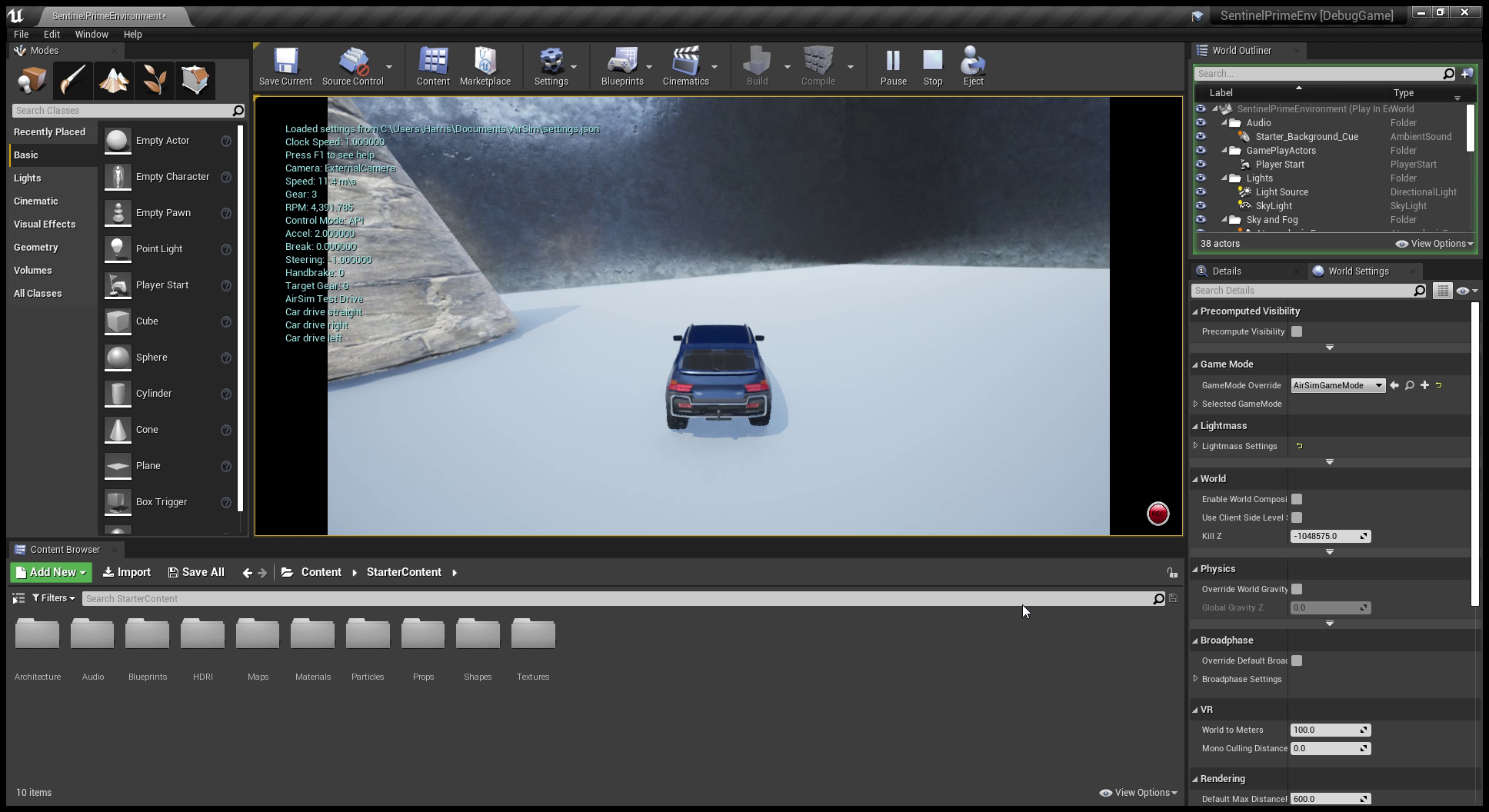

Sentinel Prime was a continuation of Project Sentinel. Sentinel Prime’s goal was to detect and classify 3D objects in indoor environments in real time. Originally, the idea was to pair Sentinel with a camera and use the existing LIDAR sensor, then employ a signal fusion network to combine the data and outperform existing 2D and 3D pipelines. Then, the computation would be performed on an FPGA or embedded device to attain real-time computation. However, I set my timeline a little too aggressively. At the start, my approach was to use an NVIDIA Jetson Nano to run existing KITTI models (KITTI is a challenging 3D dataset, a useful benchmark for 3D object detection and classification). The Jetson Nano actually gave me a lot of grief. The primary issue was the ARM-based architecture, which required me to build several libraries from scratch, which took an extremely long time. In the end, I was only able to get a few models running, and had very little time to actually pair the camera and LIDAR sensor. However, I got to explore the state of the art in 3D classification, including testing of Google’s Objectron model as well as some of the top-performing KITTI models. I also learned a lot about how to allocate time and perform literature reviews. Even though functionally Sentinel Prime was a failure, I learned a lot and will carry this forward in my career.

I also got the chance to test out some virtual simulators such as Microsoft AirSim, which I definitely aim to return to for another project.

Screenshot captured in the middle of an AirSim simulation

Professor Deming Chen

Sentinel is a real-time LIDAR point cloud classification system developed as part of my undergraduate research. The system leverages FPGA acceleration to achieve real-time performance for autonomous vehicle applications.

The system was successfully demonstrated on a test vehicle and showed promising results for real-time object detection and classification in urban environments.

Atlas is a Discord-based AI council system where multiple LLM officers collaborate to strategize, brainstorm, and evaluate ideas. Right now, Atlas can spawn officers with multiple personalities to answer questions and conduct basic research on tasks. I want to grow this out to have the officers able to implement simple POCs using headless Claude Codes.

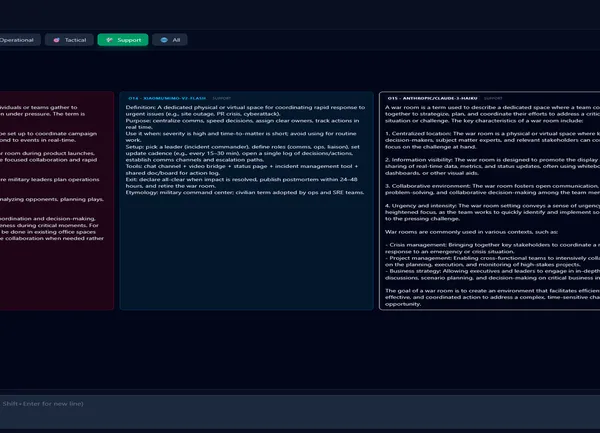

The War Room is an innovative multi-LLM (Large Language Model) interface designed to facilitate comprehensive AI-powered analysis by enabling simultaneous queries across multiple AI models. The project aims to provide users with diversified opinions and perspectives on any given question by creating a “council of LLMs with different personalities.”

The War Room leverages modern web technologies to create a unique AI interaction platform. By using OpenRouter API, the application can query multiple AI models simultaneously, allowing for comparative analysis of different AI reasoning styles and strengths.

The project demonstrates an experimental approach to AI consultation, providing users with a multi-perspective analysis tool that goes beyond single-model interactions.

Detailed project documentation and live demo available in the GitHub repository.

After my work for basic automated farming, I became really interested in how this could be applied to vertical farming. Check back here for updates on how I attempt to implement vertical farming at a global scale to revolutionize how food distribution and production works.

I recently downloaded and began visualizing some of my Facebook Messenger messaging data, which led me to consider what an AI would think of my messages. This project will use AI to analyze intent, emotion, mood, and predict future messages.

Originally, I intended to revisit this project. However, the advent of mature LLMs actually led me to abandon this project. I might return to it at some point, but as of 2026 this is abandoned.

The RISC-V pipelined CPU is the capstone assignment in ECE 411: Computer Organization and Design. This is an intensive course that teaches the fundamentals of computer architecture and then has students implement them in SystemVerilog. Working in a group of three, we designed a classic 5-stage, 32-bit RISC-V processor that could perform hazard detection and data forwarding.

I played a significant role in implementing the base CPU and had the chance to augment the CPU with the RISC-V M extension, which supports multiplication and division operations. I decided to use the well-known Wallace Tree to achieve faster multiplication than an algorithm like add-shift. For the divider, I used a shift-subtract algorithm.
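As a rough software model of a shift-subtract divider, here is a minimal sketch of unsigned restoring division. It is not the SystemVerilog from the class project; the function name and the 32-bit default width are assumptions for illustration, and a hardware divider would do one loop iteration per clock cycle.

```python
def shift_subtract_divide(dividend, divisor, width=32):
    """Unsigned restoring division: produces one quotient bit per iteration."""
    mask = (1 << width) - 1
    dividend &= mask
    divisor &= mask
    if divisor == 0:
        # This sketch simply rejects a zero divisor.
        raise ZeroDivisionError("divisor must be non-zero")
    quotient = 0
    remainder = 0
    for bit in range(width - 1, -1, -1):
        # Shift the next dividend bit into the partial remainder.
        remainder = (remainder << 1) | ((dividend >> bit) & 1)
        # If the divisor fits, subtract it and set this quotient bit.
        if remainder >= divisor:
            remainder -= divisor
            quotient |= 1 << bit
    return quotient, remainder
```

For example, `shift_subtract_divide(100, 7)` returns `(14, 2)`. The loop always runs `width` times, which is why this approach is simple but slower than the multiplier.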

Although I enjoyed this course, I feel that I could have accomplished a lot more with more time. I am planning to revisit this CPU design and add features such as a tournament branch predictor and multiple-way L1 caches. My ultimate goal is to program an FPGA with this CPU and run actual code on it. I might even pursue Tomasulo’s algorithm, as that algorithm makes much more sense to me than a pipeline. Due to academic integrity rules, I can’t upload the CPU from my class, but I will update my GitHub if I build something similar outside of a class.

This was the final project for ECE 420, Signal Processing Lab. The final project is to take any signal processing application described in a paper and implement it on an Android app. I worked with a partner to create Eigenmask: an application of Eigenfaces with the purpose of distinguishing not only who the person is, but whether or not they are wearing a mask.

The Eigenface algorithm works by finding the eigenvectors of the covariance matrix of the probability distribution over the extremely high-dimensional vector space of face images. This provides dimensionality reduction, and unknown faces can be classified by projecting them onto the eigenvectors determined in training and comparing the result with known faces. Training was done in Python, and this was ported over to Android. The project was a success, able to distinguish a few friends and me, as well as whether we were masked or maskless.
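The idea can be sketched in a few lines of plain Python. This is a toy illustration, not the project's code: the 4-pixel "images" and labels are made up, and the eigenvectors are found with simple power iteration, which only works because the example is tiny. In a real setting the labels could encode both identity and mask state.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def fit_eigenfaces(faces, k, iters=200):
    """Return (mean_face, components): the top-k covariance eigenvectors."""
    n, d = len(faces), len(faces[0])
    mean = [sum(col) / n for col in zip(*faces)]
    centered = [[p - m for p, m in zip(face, mean)] for face in faces]
    # Covariance matrix, built explicitly because d is tiny in this toy example.
    cov = [[sum(r[i] * r[j] for r in centered) / n for j in range(d)]
           for i in range(d)]
    components = []
    for _ in range(k):
        # Power iteration from a fixed, normalized starting vector.
        start = [float(i + 1) for i in range(d)]
        scale = math.sqrt(dot(start, start))
        v = [x / scale for x in start]
        for _step in range(iters):
            w = [dot(row, v) for row in cov]
            norm = math.sqrt(dot(w, w))
            if norm < 1e-12:
                break
            v = [x / norm for x in w]
        eigenvalue = dot(v, [dot(row, v) for row in cov])
        components.append(v)
        # Deflation: remove this direction so the next pass finds the next one.
        cov = [[cov[i][j] - eigenvalue * v[i] * v[j] for j in range(d)]
               for i in range(d)]
    return mean, components

def project(face, mean, components):
    """Coordinates of a face in the reduced eigenface space."""
    centered = [p - m for p, m in zip(face, mean)]
    return [dot(centered, c) for c in components]

def classify(face, mean, components, gallery):
    """Label of the nearest training face in eigenface space."""
    weights = project(face, mean, components)
    return min(gallery, key=lambda item: math.dist(item[1], weights))[0]

# Made-up training set: two "people", two flattened 4-pixel images each.
train = [("A", [1.0, 0.0, 1.0, 0.1]), ("A", [0.9, 0.1, 1.0, 0.0]),
         ("B", [0.0, 1.0, 0.1, 1.0]), ("B", [0.1, 0.9, 0.0, 1.0])]
mean, comps = fit_eigenfaces([f for _, f in train], k=2)
gallery = [(label, project(f, mean, comps)) for label, f in train]
```

With this setup, `classify([0.95, 0.05, 0.9, 0.0], mean, comps, gallery)` returns `"A"`. Real face images have thousands of pixels, so a practical version would use a linear algebra library rather than an explicit covariance matrix.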

I’ve always had an interest in using AI for text generation. One of my friends is a part of a website called Hybris Forum where they publish articles discussing technology, culture, and the dysfunction of technology with society. That same friend suggested I write an article as well, but writing is definitely not my strong suit. This was the perfect opportunity to experiment with text generation AIs. The current state of the art is GPT-3, a transformer network developed by OpenAI. Unfortunately, GPT-3 is not available to the public, because the text it generates is too similar to text written by humans.

Rather than go through the effort of training my own network, I decided to use GPT-2. The creators have open sourced a smaller version of GPT-2, which implies that it is bad enough to be given open access to, which is something I found out firsthand. The way I generated the article was by writing a long essay, and then finetuning the 355M model of GPT-2. After this, I generated lots of output text with input prompts. It was pretty painful to sort through the outputs and get something usable, and after spending a few days I didn’t have enough text for an essay. Out of frustration, I turned to InferKit, a working demo of GPT-2. This was useful for the transitions in the essay.

Check my Git to find the final Colab notebook I used. Unless GPT-3 gets released, I won’t be pursuing this project any further. I would honestly call this a failure, but the final essay (also on my Git) is pretty coherent.

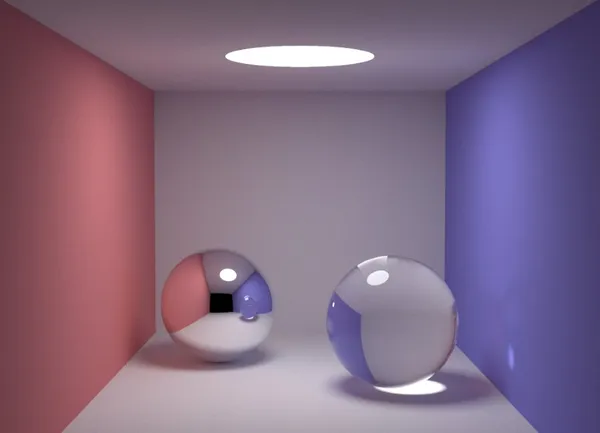

I have always had an interest in 3D design, modeling, and rendering (very much reflected by my research interests). I’ve been learning Blender ever since I built my new desktop. I am still a novice, but I have a few intro projects I have completed, and have many more planned. I upload all my renders on my VFX YouTube channel, Ethereal Studios.

More coming soon! I plan to experiment with physics simulations and video compositing.

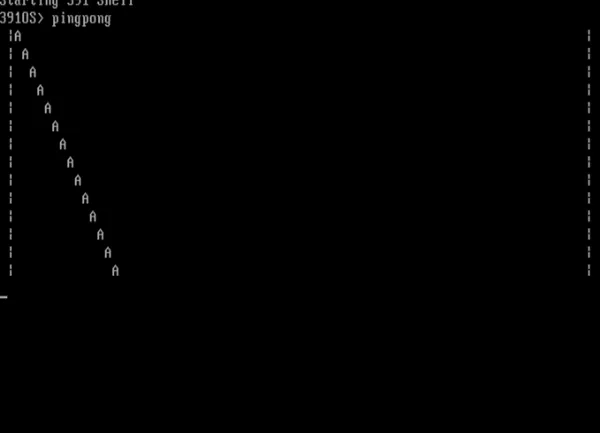

This was the final project for ECE 391, an intensive design course where I worked in a group of 4 to design and write a Unix-like operating system. This operating system (dubbed LC-4 after our university’s toy ISA, LC-3) featured device drivers (for terminal, file system, interrupt controller, real-time clock), support for system calls, context-switching, virtualization, and a round-robin scheduler.

The following are some details that I worked on to implement this OS.

Device Drivers

System calls

Context-switching

Scheduling

One of my group members uploaded a video of our final OS here.
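The round-robin policy mentioned above can be simulated in a few lines. This is only a sketch of the scheduling policy, not the kernel code: the task names and time units are hypothetical, and a real kernel would preempt on a timer interrupt and perform a context switch instead of popping from a Python queue.

```python
from collections import deque

def round_robin(tasks, quantum):
    """Simulate round-robin scheduling.

    tasks: dict mapping task name -> remaining work (in time units).
    Returns the timeline as a list of (task name, time units run) slices.
    """
    if quantum <= 0:
        raise ValueError("quantum must be positive")
    queue = deque(tasks.items())
    timeline = []
    while queue:
        name, remaining = queue.popleft()
        ran = min(quantum, remaining)
        timeline.append((name, ran))
        # Unfinished tasks go to the back of the queue and wait for their next turn.
        if remaining > ran:
            queue.append((name, remaining - ran))
    return timeline
```

For example, `round_robin({"shell": 3, "counter": 5, "fish": 2}, quantum=2)` yields the slices `shell 2, counter 2, fish 2, shell 1, counter 2, counter 1`, so every task gets a turn before any task gets a second one.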

For the final project in ECE 408 (Applied Parallel Programming), I implemented a ray tracing renderer using NVIDIA CUDA to leverage GPU parallelization for significant performance improvements.

Compared to a single-threaded CPU implementation:

ECE 408 - Applied Parallel Programming focused on heterogeneous parallel programming with emphasis on GPU architectures and CUDA programming. This project showcased the performance potential of GPU acceleration for computationally intensive graphics tasks.
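The core operation of a ray tracer is the ray-object intersection test. Here is a minimal CPU sketch in Python, not the CUDA code from the project; the scene (one sphere, a camera at the origin) and the function names are assumptions for illustration. Each pixel is computed independently of the others, which is what makes the problem a natural fit for one GPU thread per pixel.

```python
import math

def ray_sphere_hit(origin, direction, center, radius):
    """Distance along the ray to the nearest hit, or None on a miss.

    Solves |origin + t * direction - center|^2 = radius^2 for t.
    Assumes `direction` is not the zero vector.
    """
    oc = [o - c for o, c in zip(origin, center)]
    a = sum(d * d for d in direction)
    b = 2.0 * sum(o * d for o, d in zip(oc, direction))
    c = sum(o * o for o in oc) - radius * radius
    disc = b * b - 4.0 * a * c
    if disc < 0:
        return None
    root = math.sqrt(disc)
    # Try the nearer root first; ignore hits behind the ray origin.
    for t in ((-b - root) / (2.0 * a), (-b + root) / (2.0 * a)):
        if t > 1e-9:
            return t
    return None

def render(width, height):
    """ASCII render of one sphere; every pixel is an independent computation."""
    rows = []
    for y in range(height):
        row = ""
        for x in range(width):
            # Map the pixel center to [-1, 1] x [-1, 1] on an image plane at z = -1.
            u = 2.0 * (x + 0.5) / width - 1.0
            v = 1.0 - 2.0 * (y + 0.5) / height
            hit = ray_sphere_hit((0.0, 0.0, 0.0), (u, v, -1.0), (0.0, 0.0, -3.0), 1.0)
            row += "#" if hit is not None else "."
        rows.append(row)
    return rows
```

In a GPU version, the two nested loops in `render` would typically disappear, with each thread deriving its own `x` and `y` from its block and thread indices.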

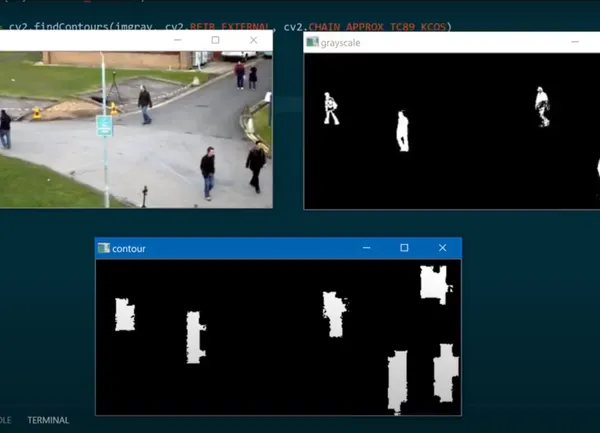

innovateFPGA is a competition hosted by Terasic, where FPGAs are applied towards problems that involve AI at the edge. In this vein, my colleague and I submitted a proposal for Project Watchdog, an FPGA-based smart home security camera that performed all classification locally and only sent the output of the classification to a computer, thus reducing the amount of bandwidth needed to send video to an external server. In addition, this solution does not need an internet connection to perform a classification.

We placed as regional finalists in the competition, and our full write-up can be found here.

For my Internet of Things class, I designed and implemented an automated farming setup. I grew lettuce, tomatoes, and strawberries indoors. The water delivery was automated, and the plants were monitored with a real-time dashboard using several sensors (temperature, soil moisture, etc.). A camera was set up to capture a time lapse. A video summarizing my conclusion can be found at the link below:

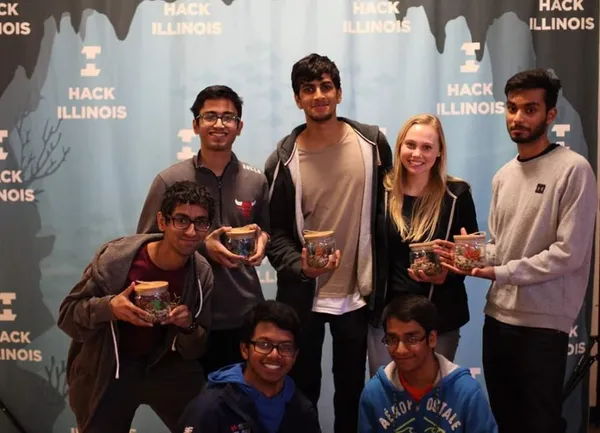

Every year, UIUC holds a competition called a Hackathon. The hackathon is a 36-hour competition where the competitors are asked to design (usually code) anything they can think of or find interesting. The goal is to create the most novel and working design in 36 hours. Since I was in an ECE fraternity (HKN), I wanted to create something with hardware to challenge myself, and also help me with job applications for internships.

The idea is novel, but a bit ambitious for a 36-hour competition. We set out to develop a non-invasive EEG wave capture device that could map thoughts. Using affordable hardware components and machine learning algorithms, we created a proof-of-concept system that could detect specific thought patterns.

The project involved:

Winner of the HackIllinois 2019:

This project demonstrated the potential of accessible brain-computer interfaces and opened doors for future research in this area.

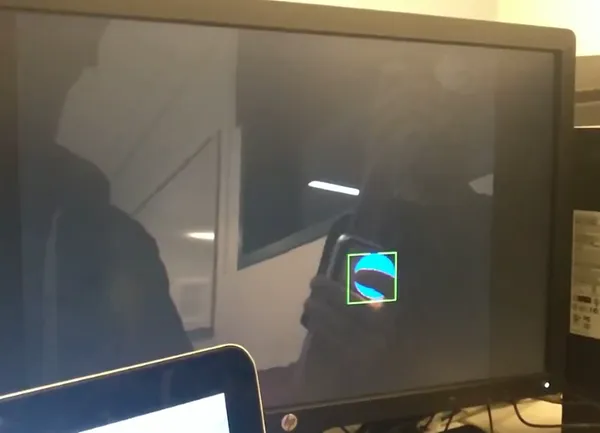

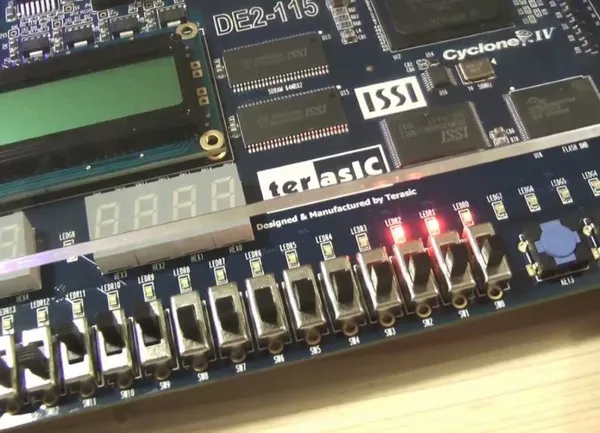

This was our final project for ECE 385, an introductory course about realizing digital designs on FPGAs. The project is named “Project LD”, which is short for “Projects Legends Never Die”, which is a nod to our professor, whose profile picture on our class website is the “Legends Never Die” meme.

This project was originally meant to be augmented reality beer pong (without the beer). The goal was to track a bouncing ping pong ball in three dimensions in real time using a camera, and then superimpose on the video feed both the path of the bouncing ball and the path that should have been taken to get the ball into the cup. We were able to get a successful 2D track of the ball’s position and scale, but did not have time to work on the 3D track.

For this project, we used the DE2-115 expansion board with the Altera Cyclone IV E FPGA. We were provided with the 1.3 megapixel camera module, and spent a majority of the project interfacing the camera with the FPGA. By the end of 2 weeks, we had a fully functioning 1.3 megapixel camera outputting its feed via VGA to a computer monitor. In addition, we were able to interface the NIOS II E microcontroller as a system-on-chip to toggle the camera settings. We made heavy use of the exposure, red gain, blue gain, and green gain settings to achieve the best picture quality we could.

The tracking was done very similarly to how the visual effects software Adobe After Effects achieves its 2D motion track. In After Effects, you choose the point you want to track, and it generates a small box on the screen. Frame by frame, it then tracks the change in position and scale by looking at the change in high-contrast points within the box. We made an FPGA module to do the exact same thing, except in real time. It worked rather well, but could not keep the track if the ball was dropped. The reason is that we still needed a module that performs frame interpolation. Since a falling ball moves very fast relative to the frame rate, comparing the frame before the drop to the frame after the drop shows a large vertical change between frames. Frame interpolation would guess where the ball would have been between the frames, and would allow for a much better track.
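As a rough illustration of the frame interpolation idea, here is a minimal sketch that estimates where the ball would have been between two tracked frames. This module was never built for the project; the function name is hypothetical, and straight-line interpolation is a simplifying assumption, since a falling ball actually accelerates.

```python
def interpolate_positions(prev_pos, next_pos, steps):
    """Evenly spaced (x, y) estimates strictly between two tracked positions."""
    (x0, y0), (x1, y1) = prev_pos, next_pos
    return [
        (x0 + (x1 - x0) * i / (steps + 1), y0 + (y1 - y0) * i / (steps + 1))
        for i in range(1, steps + 1)
    ]
```

For example, if the ball is tracked at `(100, 40)` in one frame and `(100, 200)` in the next, `interpolate_positions((100, 40), (100, 200), 3)` fills the gap with estimates at y = 80, 120, and 160, giving the tracker smaller steps to follow.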

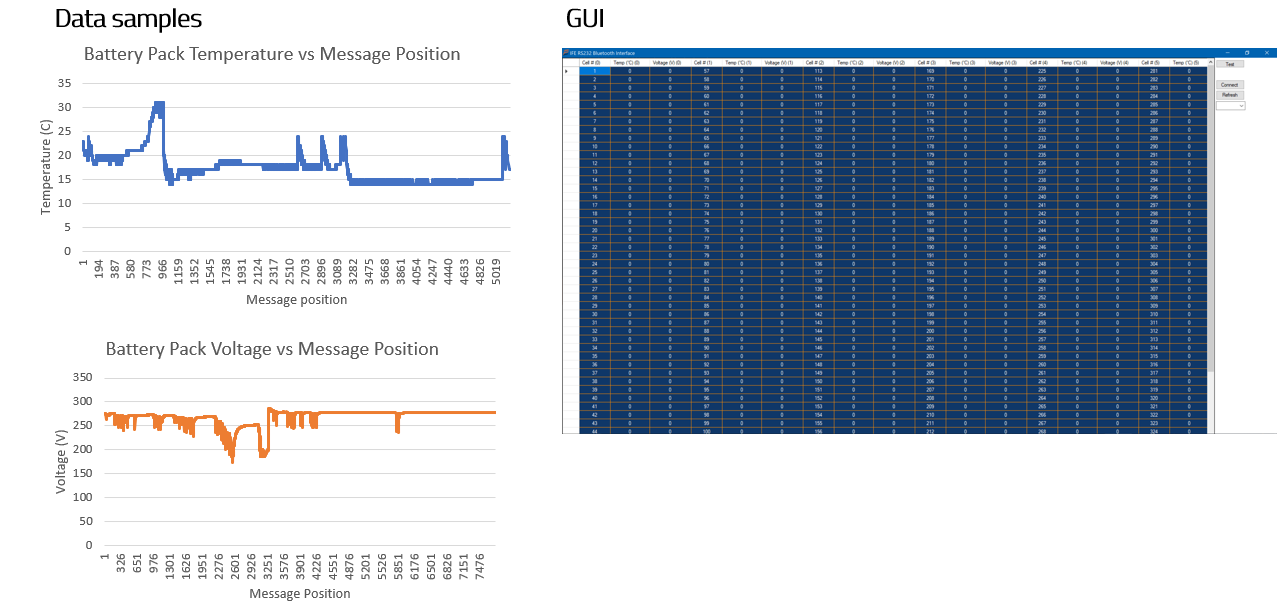

Illini Formula Electric (IFE) is a club at UIUC where we design and build a formula-style electric racecar from scratch. The club is entirely student-run. In the summer we compete at the FSAE competition in several events. I was a member of IFE for two years, detailed below.

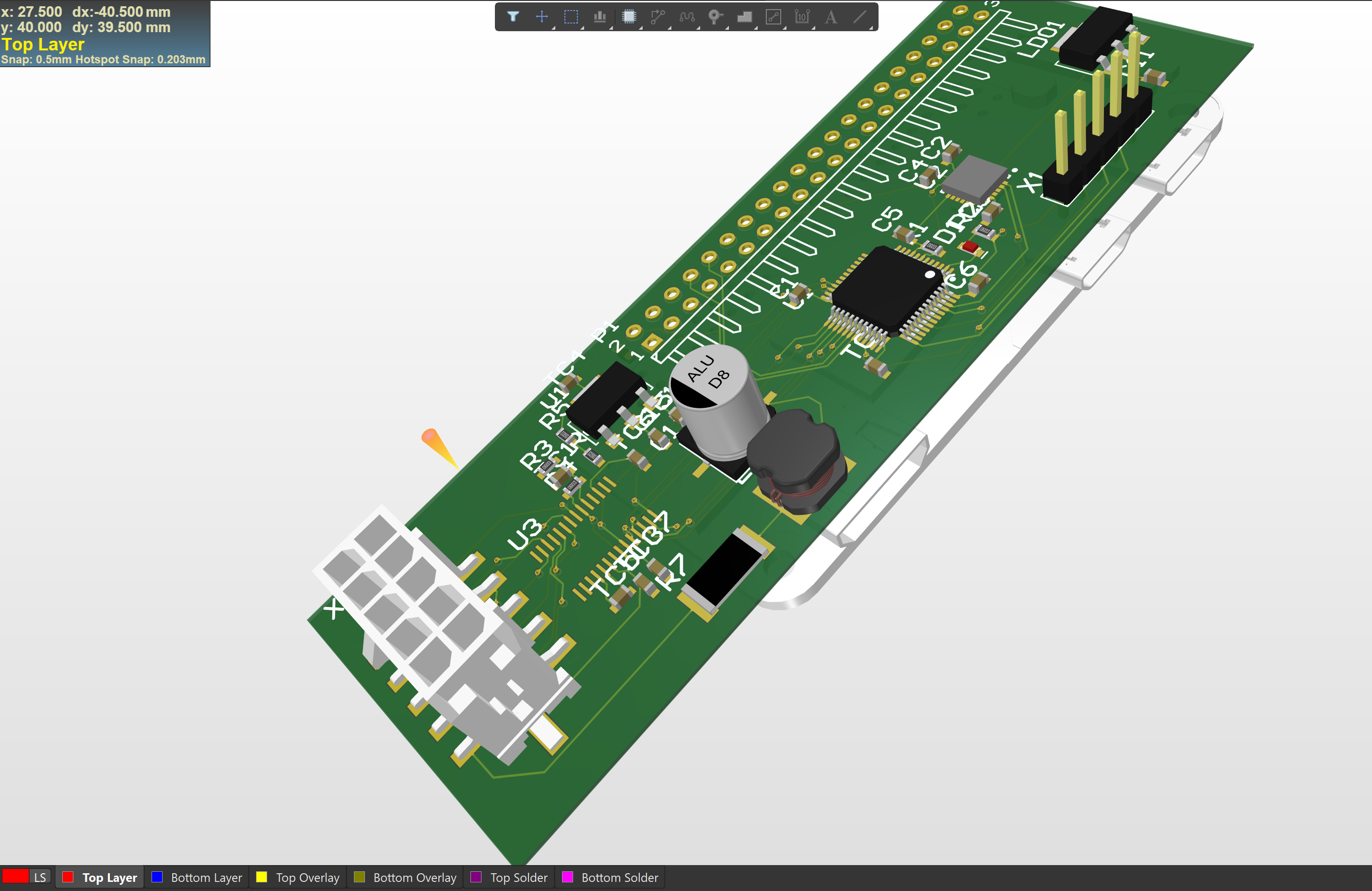

We were limited to 300V (that limit has since been raised to 600V). The battery that our car runs off of is composed of 336 LiFePO4 cells that are wired together in a series-parallel combination that yields 300V and 175A overall. The competition rules require us to monitor the temperature and voltage of each battery cell. The old method of doing this was using the Elithion Proprietary Battery Management System (BMS). This system only runs on very old team laptops, which are archaic, cumbersome, and limited to a hardwire connection. As a freshman, I designed a PCB that could read data off the CAN bus and then output it over Bluetooth to a laptop.

I also developed a GUI that was capable of outputting the data in realtime. This was done in C# using the .NET framework.

After my first year on IFE, I got promoted to the leader of the Data Acquisition and Quantitative Analysis (DAQA) team, whose primary goal is to log data from all the sensors on the car, timestamp them, and then upload it to a server. It gathers data from 4 accelerometers, 30 strain gauges, a GPS, the position of the steering wheel, the coolant temperature, the brake pressure, the throttle and regen pedal potentiometer, CAN, hall effect sensors, and the voltage of the low voltage battery pack.

The system consisted of Raspberry Pi Zero boards with a custom PCB on top of each Pi. There would be one board near each tire and one in the center, by the driver’s seat, which would distribute the data evenly across each board. Each Pi would then send data to the central pi, which would then timestamp and upload the data. The data would then be parsed and analyzed using AWS. Due to time constraints and hardware issues, the final system did not work as intended. However, it was a valuable learning experience.

As part of the 2018 ECE PULSE competition, three other group members and I interfaced a Tobii eye tracker with a C# application to assist those with dyslexia. The application would use OCR to detect when someone is trying to read text on a computer screen and, based on how long they look at the text, determine whether they are having trouble reading it. It could then graph the resulting data. Due to time constraints, we were only able to capture the eye tracker data and figure out which parts of the screen were being looked at. We were 2nd place runners-up in the competition.

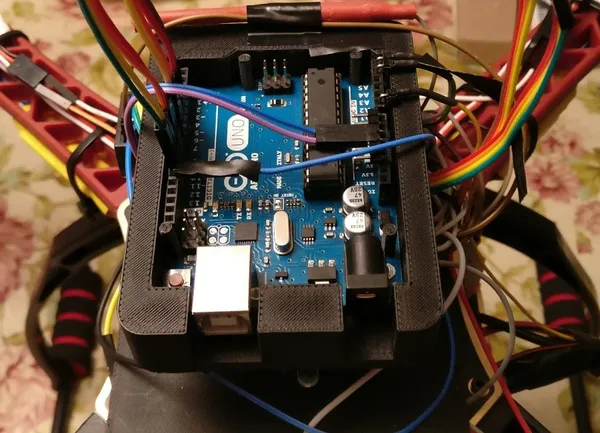

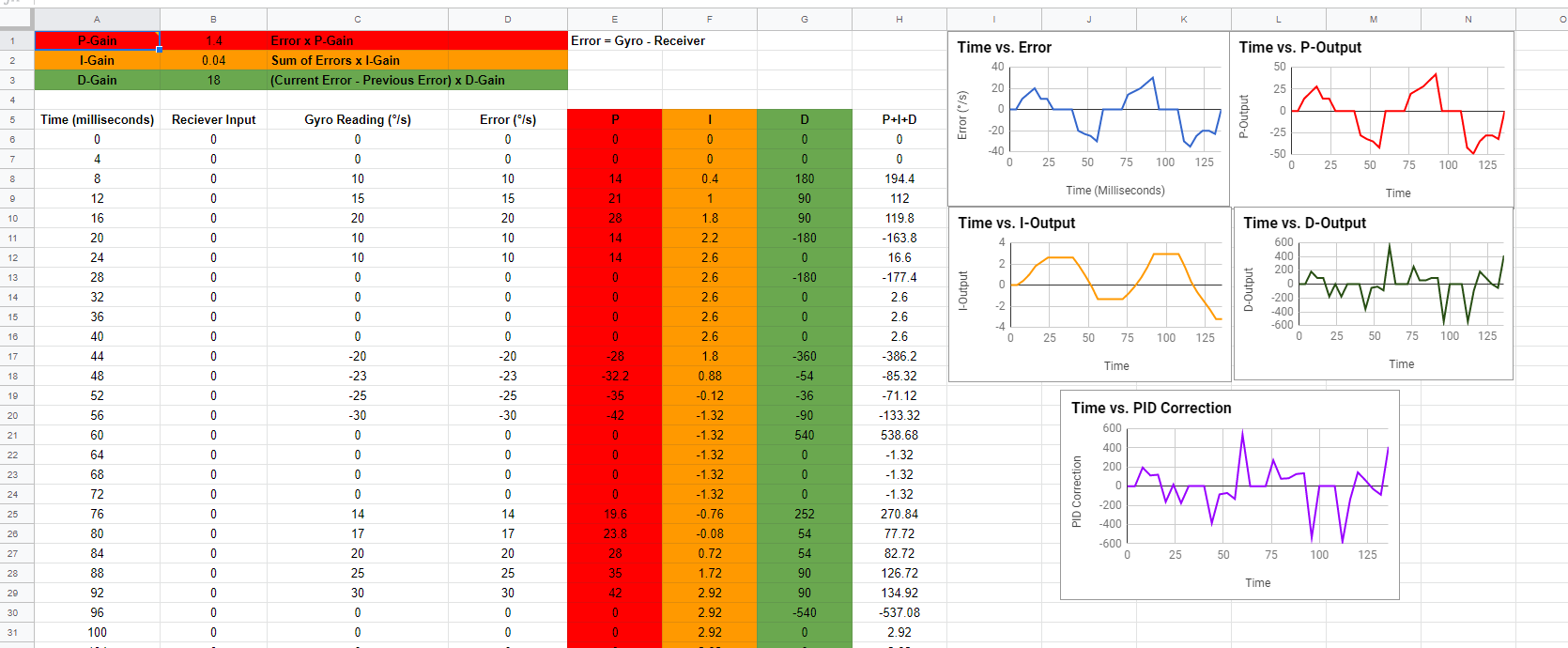

S.T.E.V.E. (which stands for Self Teaching Elevated Vehicle Entity) is a drone built from scratch to serve as an aid during natural disasters. S.T.E.V.E. was designed for Senior Tech, a high school capstone course where we could work on any STEM-related project for a year. The concept was to create an autonomous drone capable of surveying locations where individuals could be stranded in dangerous situations, without risking the life of an emergency responder. To accomplish this, we made a fully featured drone with a custom flight controller, a camera to view the surroundings (to live stream what the environment looks like back to a VR headset at the base station), a speaker with an onboard AI (to communicate with a victim of a natural disaster), and sensors (to make sure it doesn’t fly into any obstacles as well as update location). Even though we were extremely ambitious and did not meet all of our goals, this project was ultimately a success. The project was met with high praise and was presented at a limited engineering expo at Navistar. This is also the project that made me realize I wanted to be a Computer Engineer, as I worked on it during the time in which I applied to colleges. Working on this project made me realize my love for combining hardware and software to produce something greater than the sum of its parts.

A crucial part of any drone is the flight controller, which is responsible for controlling the speed of each motor. Most drone projects will use an inexpensive off-the-shelf flight controller that takes care of all the flight algorithms. All you have to do is plug in the motors and battery to have a functional drone. However, we thought it would be better as a learning experience if we designed and programmed the flight controller from scratch. We used an Arduino MEGA as our primary microcontroller and an inexpensive MPU-6050 as our Inertial Measurement Unit (IMU). We then programmed a PID controller to realize our flight controller. This took the bulk of our school year (and grew into a thousand-line-plus program) but was an incredibly rewarding experience. The microcontroller was programmed in C/C++ using the Arduino IDE.
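For readers unfamiliar with PID control, here is a minimal sketch of a single PID loop in Python. It is not the original Arduino code: the class name, gains, and example values are hypothetical, and a real flight controller would run one such loop per axis (for example roll, pitch, and yaw) and mix the outputs into motor speeds.

```python
class PID:
    """Textbook PID loop: output = kp * error + ki * integral + kd * derivative."""

    def __init__(self, kp, ki, kd):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.prev_error = None

    def update(self, setpoint, measured, dt):
        """Return the correction for one control step of length dt (dt > 0)."""
        error = setpoint - measured
        # Accumulate error over time to remove steady-state offset.
        self.integral += error * dt
        # Rate of change of the error; zero on the first call, when there is no history.
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative
```

As a usage example, a hypothetical roll loop could call `roll_pid.update(0.0, measured_roll_angle, dt)` once per pass through the main loop, which is one reason the duration of that loop matters so much.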

The goal here was to come up with a way to stream camera footage from the drone back to a virtual reality headset. This could be used to allow emergency responders to remotely survey a disaster area without the need to send in personnel. From an engineering standpoint, it is an interesting feature to try to implement. We used a Raspberry Pi 3 as the computer and a Raspberry Pi Camera for the camera. There were two reasons we went with this combination: (1) Raspberry Pis (and their cameras) are cheap, easy to use, and well documented, and (2) this allowed us to split the hardware for the project into two categories: flight essential and not flight essential. One of the most important factors optimized in a flight controller is how long the main loop of the code takes. The faster (typically) the better. By separating the hardware into what was essential for flight and what wasn’t, we could aggressively optimize the flight controller and still have other devices on the drone. If we tried to get the Arduino to compute and transfer the camera footage, the flight loop might get severely impacted and the drone would no longer fly smoothly.

This is also not flight essential hardware and was supported by the Raspberry Pi (this was made simple by the fact that there is an audio out jack on the Pi). The main goal here was to give STEVE a natural language artificial intelligence so that STEVE could talk to people and let them know help is on the way. The initial thought was to use simple if-else constructs and hard-code the speaker outputs (i.e. “Help is on the way”), but then we had the idea of using IBM AI APIs to get a natural language output from the Raspberry Pi. After struggling for a few weeks with the IBM documentation, we contacted them and they sent us a sample Android app that used their APIs. After this, it was easy to link this with the Raspberry Pi and output it through the speaker. We trained the AI and attempted to make the outputs as helpful as possible in the relevant situation.

One fundamental aspect of a drone is to not fly into nearby objects. Real drones use laser-detection to detect nearby objects, but those sensors are expensive and difficult to get working so we chose to use inexpensive ultrasonic sensors. Our end goal was to have 6 sensors in each axis, 3 in each direction (for instance, we would have 3 pointing directly upwards and 3 pointing directly downwards, thus covering the z-axis). We used a MUX shield, which adds 37 additional inputs to the Arduino and we ended up writing our own custom drivers to capture the ultrasonic values (it turns out provided library functions start to fail when you try to use an external shield). The driver code worked perfectly fine, but due to time constraints we were not able to interface it with the rest of the drone. We were able to add an off-the-shelf GPS module, which was capable of logging the drone’s coordinates. Combined with the ultrasonic sensors, we were able to show that we could capture coordinates in 3-dimensions.

Even though we were not able to meet all of the goals we set for the project, we consider STEVE an incredible success.

The first programming language I learned was JavaScript. From there, I used Codecademy to learn HTML and CSS, and I was cranking out simple, unaesthetic webpages like nobody’s business. During high school, a trend that took off (and is still extremely relevant today) was “shipping” people together. “Ship” is short for relationship, and it essentially means pairing people together as couples. This idea led directly to the Shipping Generator. We loaded everyone’s name from our classes, and every time “Generate” was clicked, a boy’s name and a girl’s name would be chosen at random. Incredibly simple, but the project took off and soared in popularity. The generator got hundreds of page requests a week, many of them during class time, since the website was not blocked by the firewall. People began asking for more features, the most prominent of which ended up being a leaderboard. Designing a leaderboard in high school with no concept of what databases were was actually a difficult task, but we ended up having the website complete a Google Form and then had a public read-only spreadsheet where we sorted the results. People figured this out and started filling out the forms themselves, which led to us adding obfuscation and security features. While rudimentary, this is still one of my favorite projects that I have worked on.

Artificial Intelligence Engineer at Kratos Defense & Security Solutions in Colorado Springs, CO, focusing on developing advanced machine learning pipelines for Space Domain Awareness (SDA) operations and deploying secure AI infrastructure for engineering teams.

Advanced expertise in unsupervised machine learning and clustering techniques for large-scale RF data analysis. Gained deep experience in secure AI infrastructure deployment, including LLM integration with cloud services while maintaining strict security and compliance requirements. Developed proficiency in autoencoder-based feature extraction and density-based clustering algorithms for anomaly detection in satellite communications.

Enabled automated anomaly detection for Space Domain Awareness operations, significantly reducing the need for manual pattern analysis of satellite behavioral data. Empowered engineering teams with secure AI-assisted development capabilities through compliant LLM infrastructure deployment, maintaining data sovereignty while enhancing productivity.

Supporting multiple engineering teams across Kratos Defense & Security Solutions by providing AI infrastructure and tools. Collaborating with SDA operations teams to develop machine learning pipelines that enhance satellite monitoring and analysis capabilities.

Software Development Engineer II at Kratos Defense & Security Solutions, focusing on building scalable data infrastructure and developing analytics prototypes for Space Domain Awareness operations. Worked with the Kratos Global Sensor Network to support mission-critical assessments through data engineering and collaborative analysis.

Advanced data engineering expertise in building and maintaining large-scale ingestion pipelines using Dagster for mission-critical Space Domain Awareness operations. Developed strong capabilities in distributed systems troubleshooting across edge computing infrastructure. Enhanced analytical prototyping skills for RF data analysis, including bandwidth utilization, satellite maneuver detection, and interference identification. Strengthened stakeholder communication by regularly presenting technical proof-of-concept work to senior leadership and translating complex findings into actionable operational insights.

Enabled stable, large-scale data ingestion into a 65M+ row Data Lake by resolving critical failures across 20 edge nodes, supporting high-performance querying for Space Domain Awareness operations. Empowered mission-critical assessments through analytics prototypes that identified bandwidth utilization patterns, detected satellite maneuvers, and flagged RF interference. Drove organizational alignment by translating proof-of-concept analyses into operational insights for engineering, operations, and customer teams at the Kratos Global Sensor Network.

Collaborated extensively with cross-functional domestic and international teams to develop and deliver analytics prototypes for Space Domain Awareness. Regularly interfaced with senior stakeholders to present technical findings and drive decision-making. Worked across engineering, operations, and customer teams to ensure alignment on data infrastructure and analytical capabilities for the Kratos Global Sensor Network.

I worked on a GPU simulator written in C++, profiling the simulator using Visual Studio and optimizing the simulator to perform more efficiently.

System Software Engineering Intern at Kratos Defense & Security Solutions during Summer 2020.

System Design/Architecture Engineering Intern at Kratos Defense & Security Solutions during Summer 2019.

Embedded Software Intern at Continental Automotive during Summer 2018.

Product Systems Intern at Weber Packaging Solutions during Summer 2017.

Served as an Undergraduate Course Assistant for ECE 385: Digital Systems Lab, helping students with FPGA programming using SystemVerilog and providing guidance on digital design projects.

CS 411: Database Systems provides comprehensive coverage of modern database technologies, from traditional relational databases to NoSQL and graph databases. The course covers both theoretical foundations and practical implementations.

CS 437: Internet of Things explores the fundamental concepts and technologies behind IoT systems. The course covers everything from low-level network protocols to cloud-based IoT platforms, with hands-on experience using AWS services.

Automated farming system demonstrating end-to-end IoT implementation, from sensors to cloud-based data processing and control.

CS 441: Applied Machine Learning provides a comprehensive introduction to machine learning algorithms and their practical applications. The course covers both classical machine learning techniques and modern deep learning approaches.

CS 498: Cloud Computing Applications provides comprehensive training in modern cloud computing technologies and architectures. The course focuses on building scalable applications using industry-standard cloud platforms and big data processing frameworks.

CS 598: Deep Learning for Healthcare covers advanced deep learning techniques applied to healthcare and medical data. The course provides comprehensive coverage of modern deep learning architectures and their applications in healthcare domains.

Transfer learning used to classify what activity is being performed based on smartwatch metrics. The project demonstrated practical application of deep learning techniques to wearable sensor data for activity recognition.

ECE 310: Digital Signal Processing covers the theory of Digital Signal Processing. The course was heavy in mathematics, but the concepts from this class show up in several places: the lab, internships, and real-world applications. The associated lab section (ECE 311) used Python to visualize many of the concepts taught in the classroom.

The lab section provided hands-on experience with DSP concepts:

ECE 385: Digital Systems Lab covers several topics that are important to programming and using FPGAs. Similar to parallel programming, impressive speedups can be achieved using FPGAs. This was one of my favorite courses, providing hands-on experience with FPGA development and digital system design.

Implemented a match moving algorithm using:

ECE 391: Computer Systems Engineering is all about the synthesis between hardware and software, working together to make a Linux-like operating system. This starts from nothing more than a few boot files. The final operating system has a read-only filesystem, interrupt support, device support (Keyboard, Interrupt Controller, Real time clock), paging, and a round-robin scheduler for multitasking. The operating system was completed in a group of 4.

Built a complete Linux-like operating system from scratch featuring:

ECE 408 focuses on heterogeneous parallel computing with emphasis on GPU architecture and CUDA programming. The course covers:

ECE 411: Computer Organization and Design provides hands-on experience in computer architecture through the design and implementation of a complete RISC-V CPU. The course covers the fundamental concepts of computer organization and design from the ground up.

Implemented a complete RISC-V CPU in SystemVerilog with:

ECE 420: Signal Processing Lab provides hands-on experience implementing signal processing algorithms on mobile platforms. The course focuses on real-time signal processing applications using Android development.

Developed Android applications implementing:

ECE 448: Introduction to Artificial Intelligence provides a comprehensive introduction to the fundamental concepts and techniques of artificial intelligence. The course covers both classical AI methods and modern deep learning approaches.